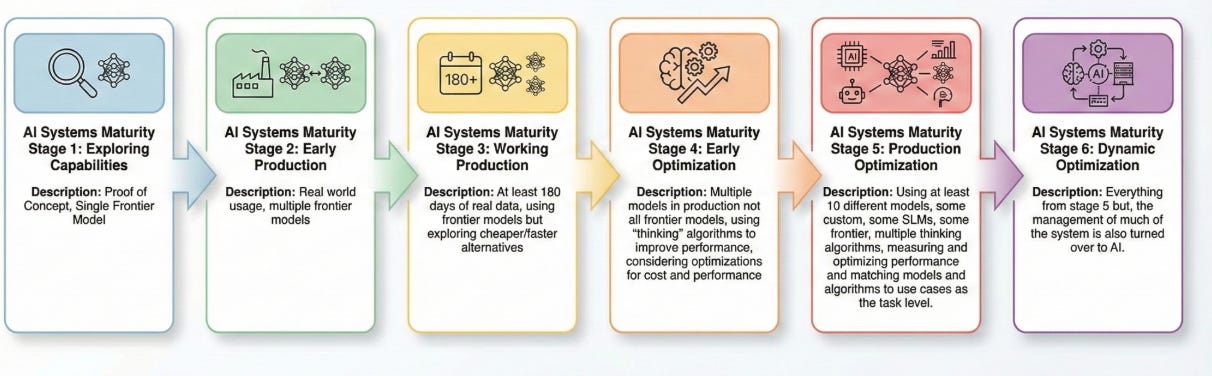

The Comfortable Illusion: Why Moving From Stage 1 to Stage 2 of AI Maturity Is So Hard

Part 1 in a Series of Posts About AI Maturity.

The “AI Lab” is a seductive place. It’s where models always seem to behave, demos look like magic, and the potential for ROI feels infinite. But for many organizations, the lab is also a dead end. According to 2025 data from IDC, a staggering 88% of AI proofs of concept (POCs) never reach production deployment. This “POC Trap” creates a dangerous “comfortable illusion”: a sense of progress that masks a complete lack of operational readiness. The jump from exploring capabilities to early production is the hardest, yet most critical, transition in AI maturity.

The Reality of Stage 1

Exploring Capabilities

Stage 1 is the honeymoon phase of AI adoption. At this level, organizations are typically “model-loyal,” leaning heavily on a single frontier model like GPT-4o or Claude 3.5. These initiatives are often driven by a lone “AI champion” or a small innovation team tasked with finding the “art of the possible.”

In Stage 1, success is measured by impressive demos, not business outcomes. Because there are no real users and no production data, there is zero accountability for edge cases or long-term costs. It’s an environment where the stakes are low and expectations are flexible. If the model hallucinates during a demo, it’s a “learning moment”; in production, it’s a liability. This stage feels like progress, but it’s often just a series of expensive experiments without a destination.

What Stage 2 Actually Looks Like

Early Production

Transitioning to Stage 2 is like taking a prototype car out of the wind tunnel and onto a rain-slicked highway. Suddenly, the environment is no longer controlled. Real users are interacting with your AI in actual workflows, and they will use it in ways your innovation team never imagined.

At this stage, you are no longer model-monogamous; you are likely evaluating multiple frontier models for cost, latency, and accuracy. This is where you first encounter failure at scale. Hallucinations that were rare in testing become daily occurrences once you are processing thousands of requests. To illustrate, a 1% failure rate is easy to miss across 50 demo prompts, but it means roughly 100 bad answers for every 10,000 requests. Costs shift from a theoretical API budget to a tangible line item that CFOs start questioning. Most importantly, accountability shifts. AI is no longer an “interesting experiment”; it’s a tool that people rely on to do their jobs, and it needs to work.

What Drives the Transition

The push into Stage 2 is rarely a natural evolution; it’s usually the result of a “forcing function.”

Business Pressure: Leadership is tired of hearing about “potential.” They want to see the ROI promised in the initial pitches.

User Demand: Sometimes, a POC is so successful that employees start folding it into their daily work unofficially, shadow-IT style, and then demand official access and support.

Competitive Anxiety: Seeing a rival launch a production-grade AI agent creates an immediate urgency that labs cannot replicate.

Warning Signs You’re Stuck in Stage 1

If you find your organization repeating these patterns, you’re stuck in the “comfortable illusion”:

You have a dozen POCs but zero live deployments.

You’re rotating through new models as they’re released without a systematic way to compare them.

“We’re still exploring” has become your permanent status update.

A 2025 MIT study found that despite $30–40 billion in enterprise investment, only 5% of AI initiatives are producing measurable returns. This is the hallmark of Stage 1 stagnation.

Key Questions Before Making the Leap

Before you move into the “uncomfortable reality” of Stage 2, you must be able to answer the following questions. If you can’t, you aren’t ready for production.

On Readiness:

Do we have a specific workflow with measurable outcomes (e.g., reducing ticket resolution time by 20%), or just a vague “use case”?

Who owns the model performance once it’s in the wild?

What is our tolerance for “failure,” and do we have a human-in-the-loop (HITL) system to catch it?
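One way to make the tolerance question concrete is a simple routing rule: outputs above a confidence threshold go straight through, and everything else lands in a human review queue. The sketch below is illustrative only. The `ModelOutput` type, the `confidence` score, and the default threshold of 0.8 are all assumptions; how you obtain a trustworthy confidence signal depends on your model and workflow.

```python
from dataclasses import dataclass


@dataclass
class ModelOutput:
    text: str
    confidence: float  # hypothetical score in [0, 1]; its source is an assumption


def route(output: ModelOutput, threshold: float = 0.8) -> str:
    """Return 'auto' to pass the output along, or 'review' to queue it for a human."""
    if not 0.0 <= output.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return "auto" if output.confidence >= threshold else "review"


def review_rate(outputs: list[ModelOutput], threshold: float = 0.8) -> float:
    """Fraction of outputs that would land in the human review queue."""
    if not outputs:
        return 0.0
    flagged = sum(1 for o in outputs if route(o, threshold) == "review")
    return flagged / len(outputs)
```

Tracking `review_rate` over time also tells you how much human effort a given tolerance actually costs, which is useful input when deciding where to set the threshold.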

On Infrastructure & Economics:

How will we monitor for “drift” or declining accuracy over time?

Can our infrastructure support swapping models without rebuilding the entire application?

What is our actual cost per transaction? Gartner predicted that at least 30% of GenAI projects would be abandoned after POC by the end of 2025, citing poor data quality, inadequate risk controls, escalating costs, or unclear business value. My guess is that when the data is tallied, the real number will turn out to be higher.
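The cost-per-transaction question comes down to simple arithmetic once you know your token counts and your provider's rates. Here is a minimal sketch, assuming token-based pricing quoted per million tokens. The function name, its parameters, and the prices in the usage note are placeholders, not any provider's real rates.

```python
def cost_per_transaction(
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
    calls_per_transaction: int = 1,
) -> float:
    """Estimate the model cost of one business transaction, in dollars.

    A single transaction (for example, resolving one support ticket) may
    involve several model calls, so the per-call cost is multiplied by
    calls_per_transaction.
    """
    per_call = (
        input_tokens * input_price_per_million
        + output_tokens * output_price_per_million
    ) / 1_000_000
    return per_call * calls_per_transaction
```

With made-up numbers (2,000 input tokens and 500 output tokens per call, $3 and $15 per million tokens, three calls per transaction), this works out to about $0.04 per transaction, or roughly $4,050 a month at 100,000 transactions. The figure leaves out retries, infrastructure, and human review time, so treat it as a floor rather than a full cost-to-serve.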

On Governance:

Who is responsible for reviewing outputs before they reach a customer?

What is our policy on which data may be used in prompts, and how strictly is it enforced?

Making the Move

To cross the chasm between experimentation and production, you need a surgical approach rather than a shotgun strategy.

Pick ONE high-value workflow: Don’t try to scale five experiments at once. Choose the one that addresses the sharpest pain point and has the clearest metric.

Build Evaluation Infrastructure First: You cannot manage what you cannot measure. Set up automated “evals” to test model updates against your specific business data.

Plan for Multi-Model Testing: Design your architecture to be model-agnostic from day one so you aren’t locked into a single provider’s pricing or limitations.

Redefine Success: Move past “does it work?” and start measuring user adoption, latency, and cost-to-serve.
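The evaluation and multi-model steps above fit together naturally: if every model sits behind the same small interface, one eval suite can score all of them. The following is a minimal sketch under that assumption. `Model`, `EvalCase`, `EvalResult`, `run_evals`, and `EchoModel` are hypothetical names invented for illustration. A real setup would wrap each provider's client behind `complete` and draw its cases from your own business data.

```python
import time
from dataclasses import dataclass
from typing import Callable, Protocol


class Model(Protocol):
    """Anything with a name and a complete() method can be evaluated."""
    name: str

    def complete(self, prompt: str) -> str: ...


@dataclass
class EvalCase:
    prompt: str
    passes: Callable[[str], bool]  # business-specific check on the output


@dataclass
class EvalResult:
    model: str
    pass_rate: float
    mean_latency_s: float


def run_evals(model: Model, cases: list[EvalCase]) -> EvalResult:
    """Run every case against one model and summarize accuracy and latency."""
    if not cases:
        raise ValueError("need at least one eval case")
    passed = 0
    total_latency = 0.0
    for case in cases:
        start = time.perf_counter()
        output = model.complete(case.prompt)
        total_latency += time.perf_counter() - start
        if case.passes(output):
            passed += 1
    return EvalResult(model.name, passed / len(cases), total_latency / len(cases))


class EchoModel:
    """Stand-in for a real provider client; it just upper-cases the prompt."""
    name = "echo-stub"

    def complete(self, prompt: str) -> str:
        return prompt.upper()
```

Swapping providers then means writing one new adapter class and rerunning `run_evals` with the same cases, which gives you comparable pass rates and latencies instead of impressions from a demo.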

Closing

The gap between Stage 1 and Stage 2 is where most AI initiatives go to die. It is the filter that separates the “AI-curious” from the “AI-mature.” Moving into production is messy, expensive, and often reveals that your initial assumptions were wrong—but it is the only way to build a foundation for real competitive advantage.

Stage 2 isn’t the finish line; it’s just the beginning of true optimization. But you can’t optimize what isn’t live.

Honest Assessment: Look at your current AI projects. How many are actually serving real users today? If the answer is zero, you’re still in the illusion.

A note on Neurometric: if you are moving beyond Stage 1 and you care about model performance, let our tool automatically analyze your workflows and find the best model.